Quickstart guide

Get up and running with Loc.ai – from account registration to your first inference result.

This guide walks you through the complete journey: creating your account, registering a device, deploying a model, running inference, and viewing results.

The screenshots Q1–Q4 are the first four figures in order: account registration (Q1), device registration popup (Q2), generated install command (Q3), and deploy model dialog (Q4).

Prerequisites

Before you begin, ensure your system meets these requirements:

OS — Windows 10/11, macOS (Apple Silicon/Intel), or Linux (Ubuntu/Debian)

Python — Python 3.11+ (handled automatically by our installer)

Internet — Required to install dependencies and connect to the control plane
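If you want to confirm the basics before installing, the following is a minimal sketch of a pre-flight check. The helper names are hypothetical and not part of Loc.ai. It only checks the Python version and the OS family. It does not check the Windows release or the Linux distribution.

```python
import platform
import sys

# Assumption: these are the names platform.system() reports for the
# operating system families listed above (Windows, macOS, Linux).
SUPPORTED_SYSTEMS = {"Windows", "Darwin", "Linux"}
MIN_PYTHON = (3, 11)


def python_ok(version_info=sys.version_info):
    """Return True if the interpreter is Python 3.11 or newer."""
    return tuple(version_info[:2]) >= MIN_PYTHON


def system_ok(system_name=None):
    """Return True if the OS family is one of the supported ones."""
    if system_name is None:
        system_name = platform.system()
    return system_name in SUPPORTED_SYSTEMS


print("Python 3.11+:", python_ok())
print("Supported OS family:", system_ok())
```

A failed Python check is not necessarily a blocker, since the installer is described as handling Python automatically.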

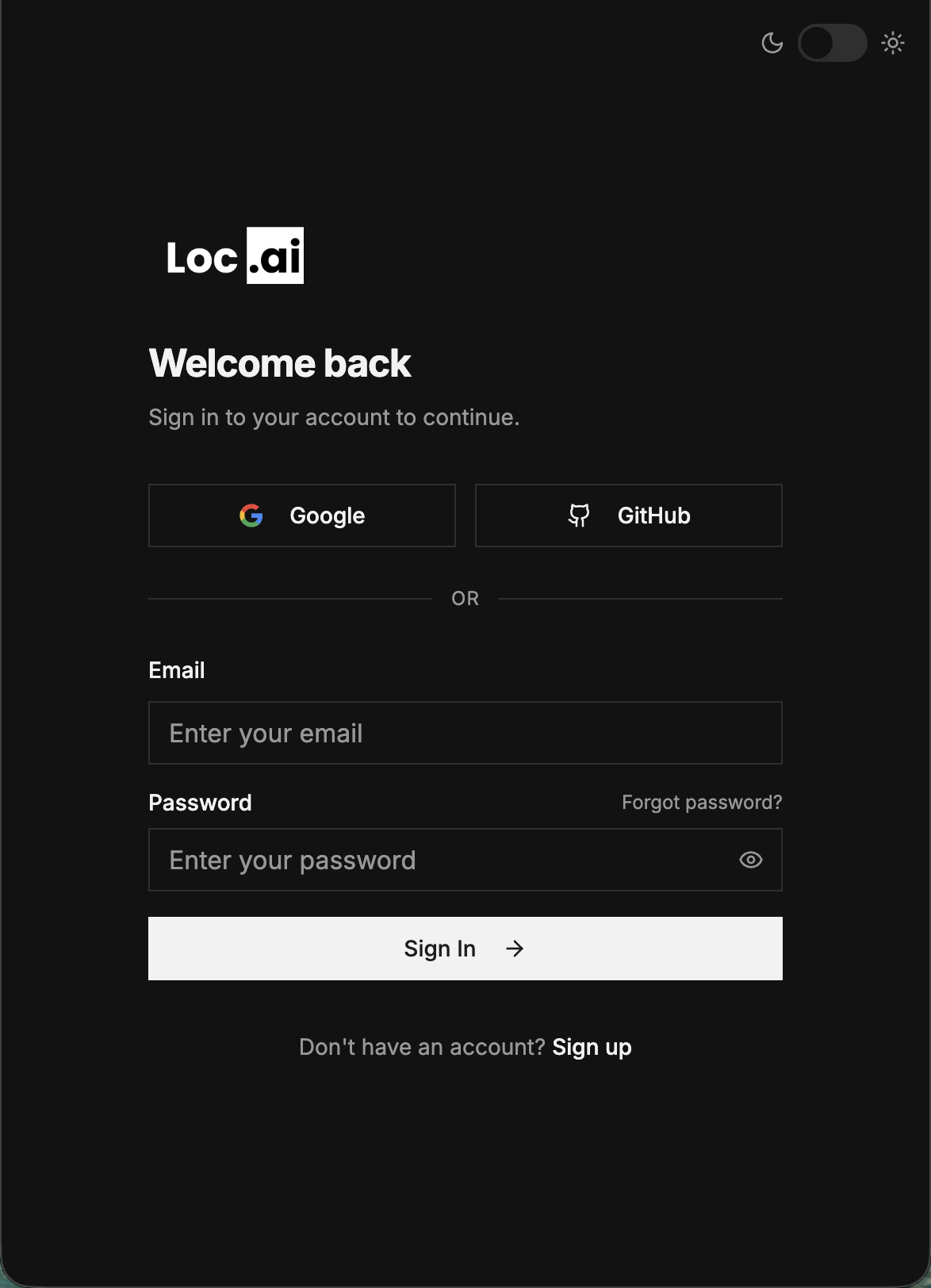

Register your account

To get started with Loc.ai, use the registration form at control.locai.co.uk.

- Navigate to the Loc.ai registration page

- Fill in your email, full name, and password

- Click Create account

- Verify your email if prompted, then sign in to the dashboard

Q1. Loc.ai registration form

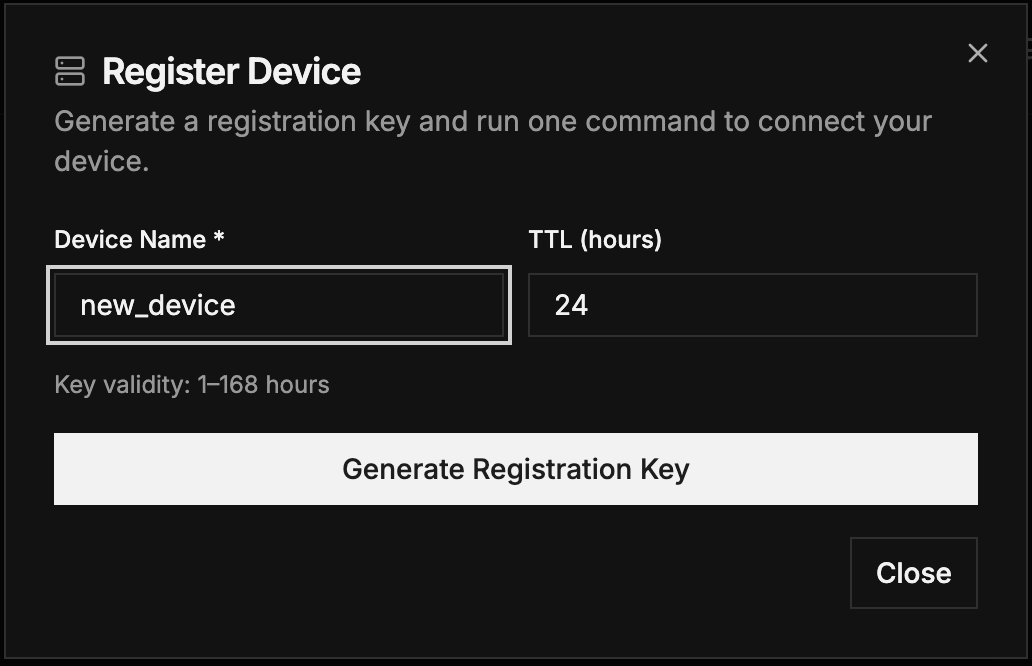

Register your first device

You can install Loc.ai:Link and register a device at the same time using the registration flow in the Control platform.

- Log in to the Loc.ai dashboard

- Click Register Device to open the registration popup

- Enter a device name and set a TTL (key validity, 1–168 hours)

- Click Generate Registration Key

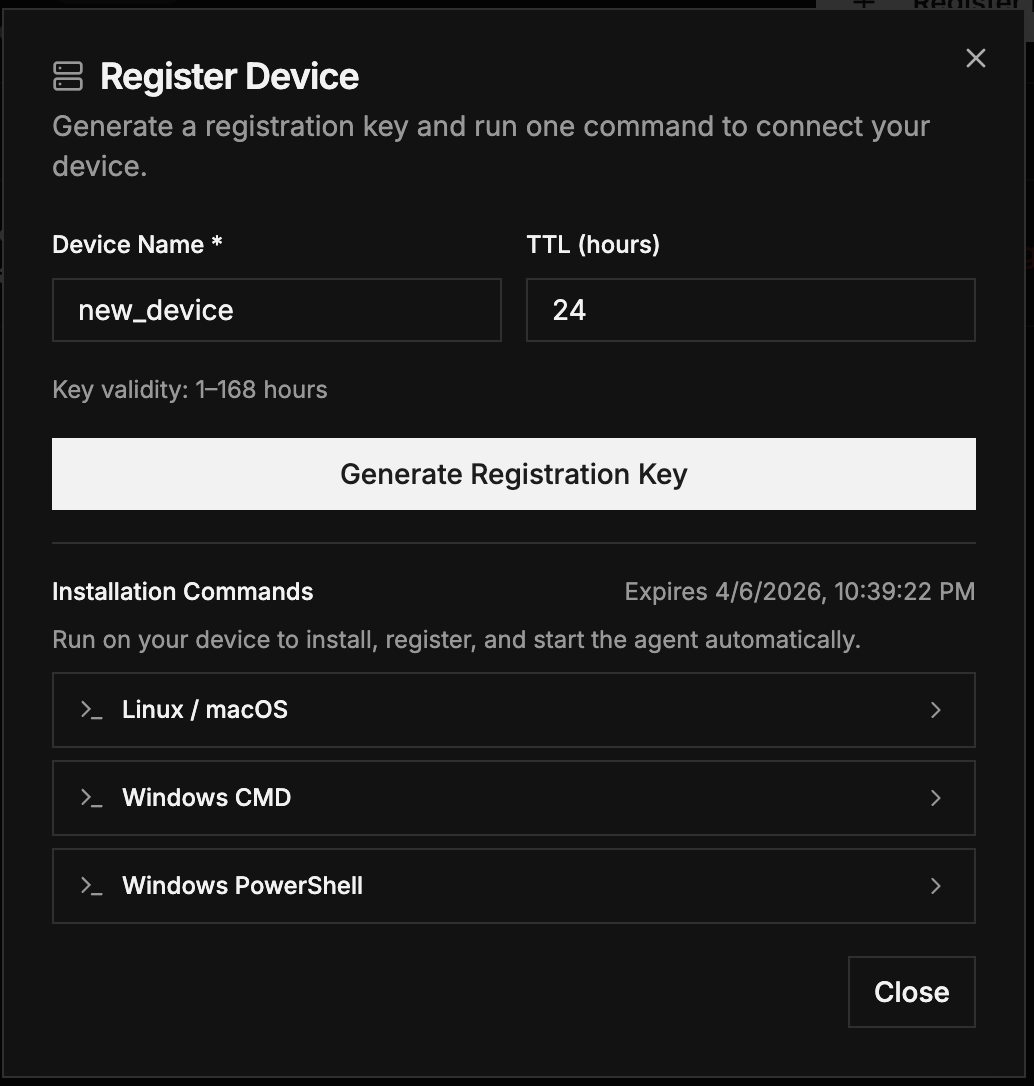

- Copy the generated curl command and paste it into the terminal of your remote device

Q2. Register Device popup – enter device name, TTL, and generate key

Q3. Generated one-line installation command ready to copy

The generated command automatically includes your device name, username, and registration key. Choose your preferred installation method below.
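The TTL set in the registration popup is a validity window of 1 to 168 hours (up to seven days). As a rough illustration, the sketch below works out when a key would stop being valid. It assumes the window starts when the key is generated, which this guide does not state explicitly, and `key_expiry` is a hypothetical helper.

```python
from datetime import datetime, timedelta, timezone

MIN_TTL_HOURS = 1
MAX_TTL_HOURS = 168  # seven days


def key_expiry(created_at, ttl_hours):
    """Return the moment a key created at `created_at` would expire."""
    if not MIN_TTL_HOURS <= ttl_hours <= MAX_TTL_HOURS:
        raise ValueError("TTL must be between 1 and 168 hours")
    # Assumption: validity is counted from the time of generation.
    return created_at + timedelta(hours=ttl_hours)


created = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
print(key_expiry(created, 24))   # one day after creation
print(key_expiry(created, 168))  # one week after creation
```

Pick a TTL long enough to cover the time between generating the key and running the installer on the remote device.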

One-line installer

Recommended: the fastest way to deploy

This single command handles dependency installation (uv), environment setup, device registration, and starts the agent automatically.

Linux / macOS

curl -sSL https://raw.githubusercontent.com/locai-co-uk/locai-link/main/install.sh | bash -s -- --device-name "YOUR_DEVICE_NAME" --username "YOUR_USERNAME" --registration-key "YOUR_KEY" --start-running

Windows: Command Prompt (CMD)

curl -LsSf https://raw.githubusercontent.com/locai-co-uk/locai-link/main/install.cmd -o install.cmd && install.cmd --device-name "YOUR_DEVICE_NAME" --username "YOUR_USERNAME" --registration-key "YOUR_KEY" --start-running

Windows: PowerShell

& ([scriptblock]::Create((irm https://raw.githubusercontent.com/locai-co-uk/locai-link/main/install.ps1))) -DeviceName "YOUR_DEVICE_NAME" -Username "YOUR_USERNAME" -RegistrationKey "YOUR_KEY" -StartRunning

Replace YOUR_DEVICE_NAME, YOUR_USERNAME, and YOUR_KEY with the credentials provided in your Loc.ai dashboard.
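If you fill in the placeholders by hand, values containing spaces or shell metacharacters need quoting. The sketch below shows one way to assemble the Linux/macOS command safely. `build_install_command` is a hypothetical helper, and the URL and flags are copied from the command shown in this guide.

```python
import shlex

INSTALL_URL = (
    "https://raw.githubusercontent.com/locai-co-uk/locai-link/main/install.sh"
)


def build_install_command(device_name, username, registration_key):
    """Fill the Linux/macOS one-line installer with shell-quoted values."""
    args = [
        "--device-name", device_name,
        "--username", username,
        "--registration-key", registration_key,
        "--start-running",
    ]
    # shlex.quote leaves simple tokens alone and quotes anything unsafe.
    quoted = " ".join(shlex.quote(arg) for arg in args)
    return f"curl -sSL {INSTALL_URL} | bash -s -- {quoted}"


print(build_install_command("lab pi 01", "my-username", "YOUR_KEY"))
```

In practice, copying the command generated by the dashboard remains the simplest option, since it already includes your credentials.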

Install from source

Advanced: manual installation for development or customisation

Use this method if you need to modify the agent code, contribute to development, or require a specific version.

1. Get the code

Clone the repository or download the latest release.

git clone https://github.com/locai-co-uk/locai-link.git

cd locai-link

Or download the ZIP: Latest release

2. Setup environment

Use the install script for a wizard-like setup process:

Linux / macOS

chmod +x install.sh

./install.sh

Windows (PowerShell)

.\install.ps1

Alternatively, set up via the manager directly:

python3 manager.py setup

# Or with uv (recommended)

uv run manager.py setup

3. Register device

Register the device using a key generated from the Loc.ai dashboard:

uv run manager.py register \
  --device-name "my-edge-device-01" \
  --username "my-username" \
  --registration-key "YOUR_REG_KEY"

To reactivate an existing device, replace register with activate and provide your --device-id and --api-key.
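To script the two variants, the sketch below builds the argument lists for `register` and `activate`. `manager_command` is a hypothetical helper. The flag names come from this guide, and whether `activate` needs any further options is not covered here, so treat the second call as an assumption.

```python
def manager_command(action, **options):
    """Build an argument list for `uv run manager.py <action> ...`."""
    if action not in {"register", "activate"}:
        raise ValueError(f"unsupported action: {action}")
    argv = ["uv", "run", "manager.py", action]
    for name, value in options.items():
        # Turn a keyword such as device_name into the flag --device-name.
        argv += ["--" + name.replace("_", "-"), value]
    return argv


register = manager_command(
    "register",
    device_name="my-edge-device-01",
    username="my-username",
    registration_key="YOUR_REG_KEY",
)
activate = manager_command(
    "activate", device_id="YOUR_DEVICE_ID", api_key="YOUR_API_KEY"
)
print(register)
print(activate)
```

Either list can be passed to `subprocess.run(..., check=True)` from the repository root. Using a list rather than a single string avoids shell quoting issues.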

4. Run the agent

uv run manager.py run

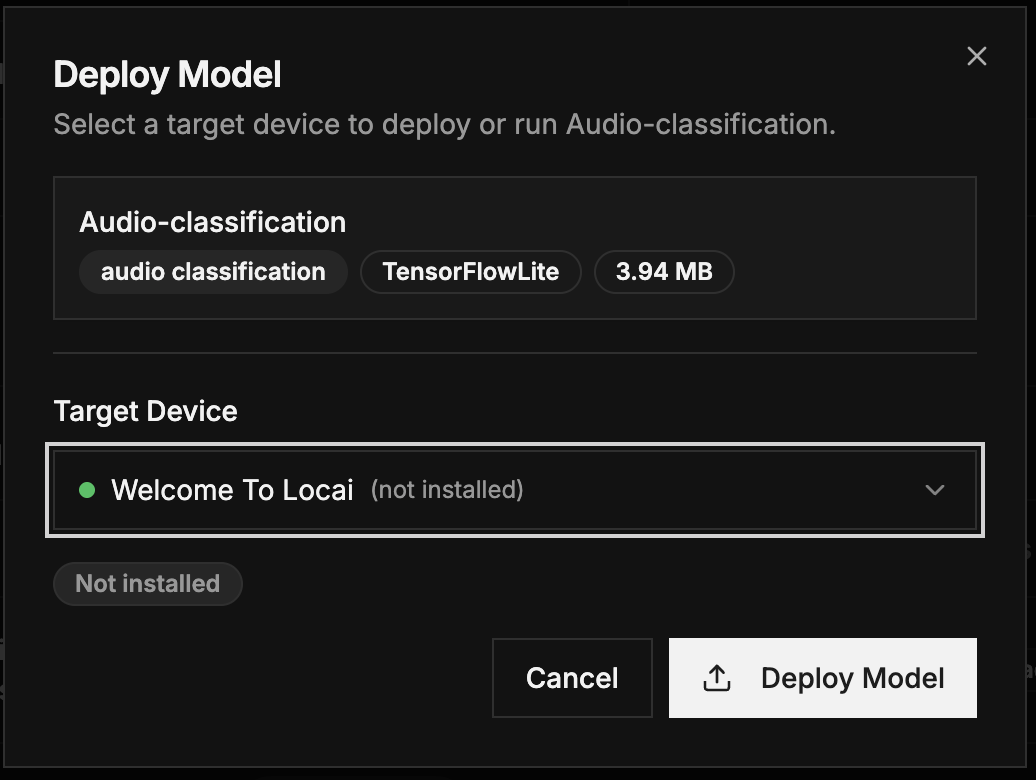

Deploy your first model

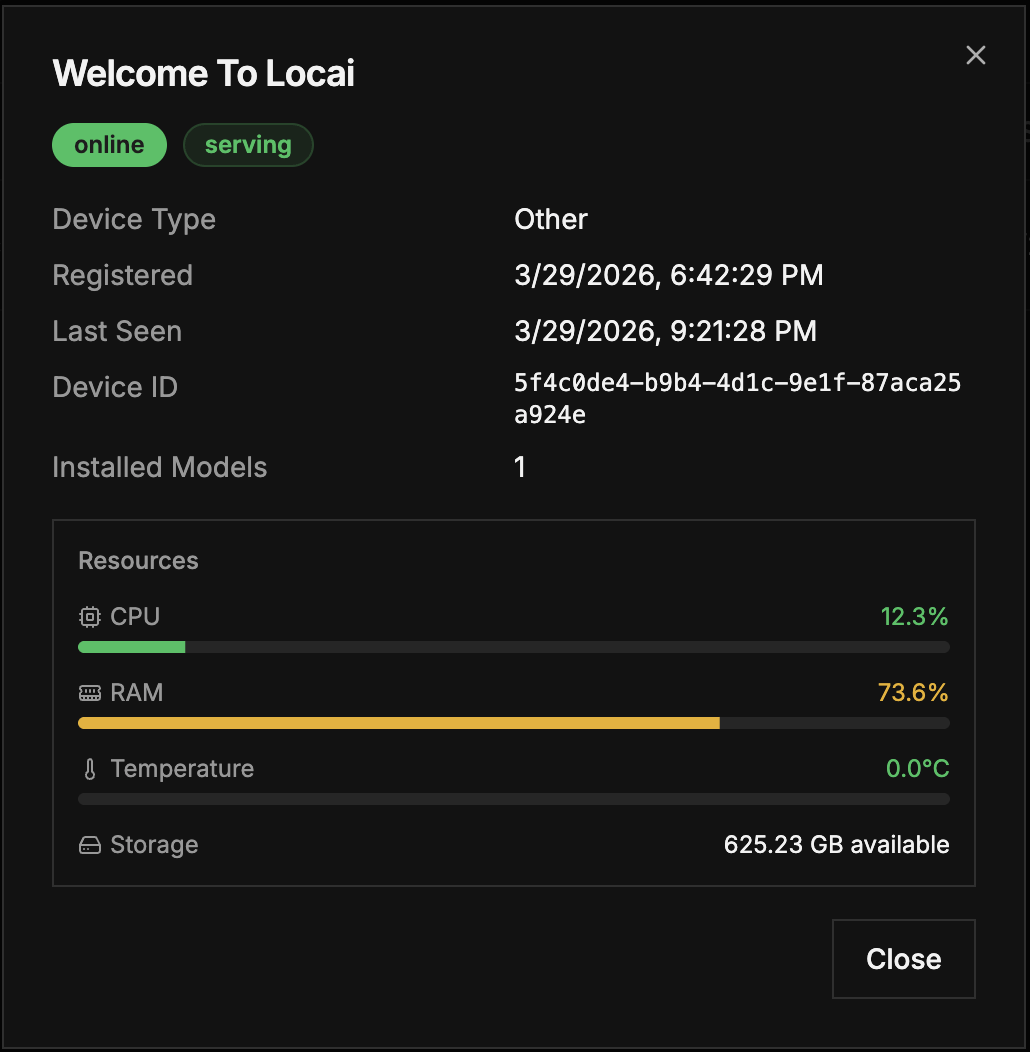

Once your device is registered and connected, deploy a model from the Model Library:

- Navigate to the Model Library in the Loc.ai dashboard

- Select a model (e.g., an image classification model or LLM)

- Click Action to open the deployment dialog

- Select your registered device as the target

- Review the deployment summary (model name, configuration, runner type)

- Click Deploy Model

The deployment dialog shows your device's current resource usage (CPU, RAM, temperature, storage) to help you choose an appropriate target.

Q4. Deploy Model dialog – select target device and review deployment summary

Run inference

After deploying a model, you can start inference directly from the dashboard:

- On the Deploy Model page, the Deploy Model button changes to Start Inference

- Click Start Inference to begin processing

- The model will run locally on your edge device and send results back to the platform

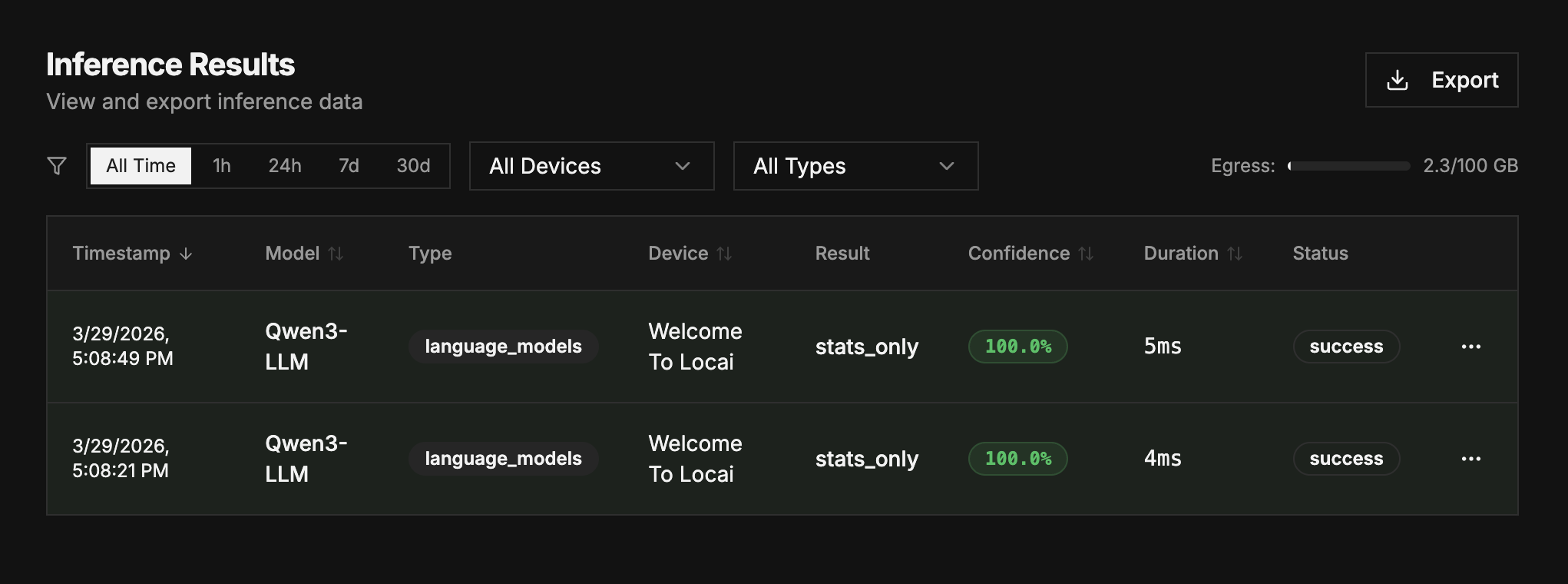

View and export results

After running inference, results are sent back to the Loc.ai platform where you can view, filter, and export them.

Quick summary

The Inference Results card on the dashboard shows a summary of recent detections, including the classification result and the device that produced it.

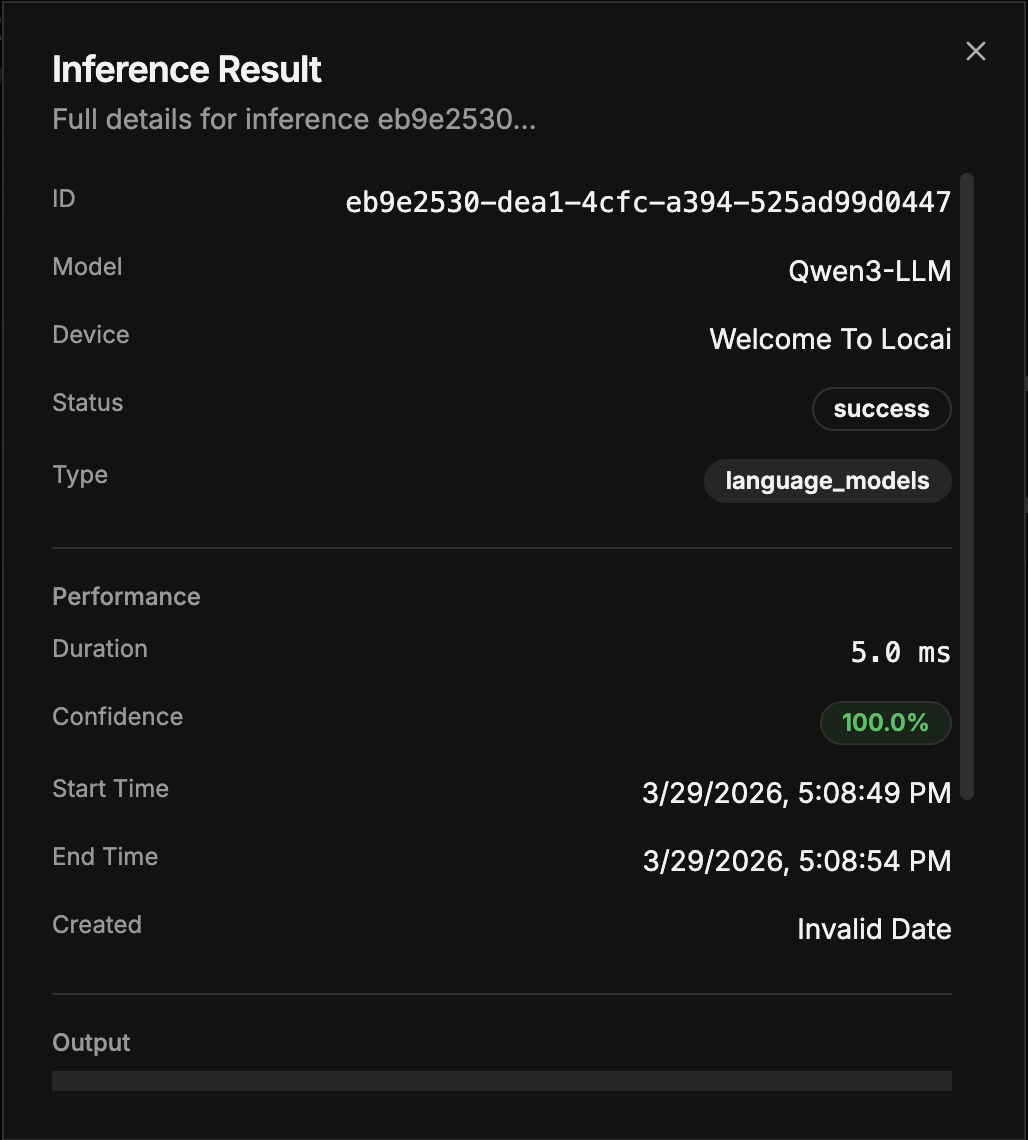

Detailed results

Click Detailed Results to expand the view with a full table including:

- Model – The model used for inference

- Device – The device that ran the model

- Result – The classification or output

- Confidence – Confidence score as a percentage

- Duration – Time taken for the inference

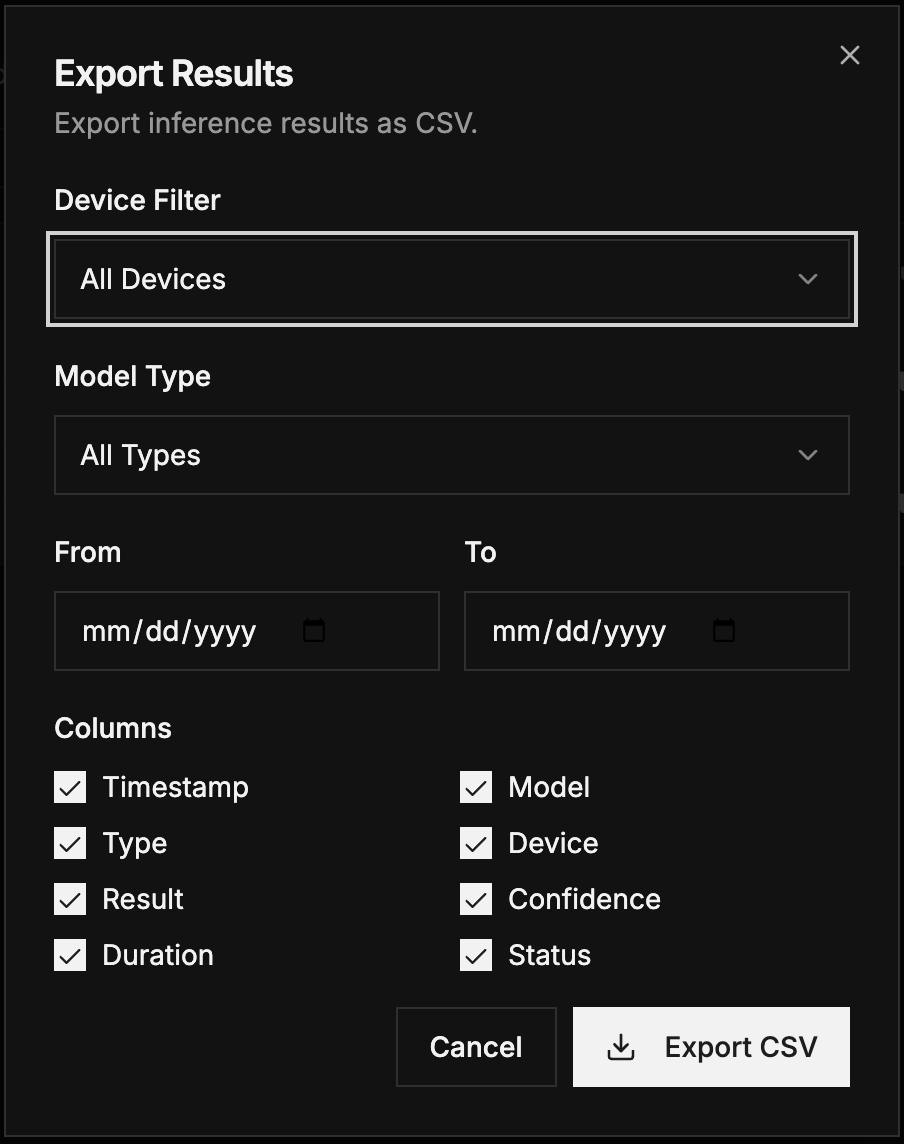

Exporting results

Export your inference results in CSV format with flexible filtering:

- Filter by device, model type (image classification, audio classification, language models), and time range

- Select specific columns to include in the export (ID, Device, Model Name, Model Type, etc.)

- Preview your selection before exporting
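Once exported, the CSV can be processed with standard tooling. The sketch below filters rows by device and minimum confidence. The header names and sample rows are made up for illustration and may not match a real Loc.ai export, so adjust them to the columns you selected.

```python
import csv
import io

# Hypothetical export: header names and rows are illustrative only.
SAMPLE_EXPORT = """ID,Device,Model Name,Model Type,Result,Confidence,Duration
1,lab-pi-01,example-classifier,image classification,cat,91.5,0.12
2,lab-pi-01,example-classifier,image classification,dog,48.0,0.11
3,lab-pi-02,example-classifier,image classification,cat,77.2,0.15
"""


def confident_results(csv_text, device, min_confidence):
    """Return rows for one device at or above a confidence percentage."""
    rows = csv.DictReader(io.StringIO(csv_text))
    return [
        row
        for row in rows
        if row["Device"] == device
        and float(row["Confidence"]) >= min_confidence
    ]


for row in confident_results(SAMPLE_EXPORT, "lab-pi-01", 50.0):
    print(row["ID"], row["Result"], row["Confidence"])
```

For a real file, replace `io.StringIO(csv_text)` with an open file handle, for example `open("export.csv", newline="")`.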

Serving a model

Models served via Loc.ai are OpenAI API compliant. Serving lets you build applications that call an API on the user's device, backed by ML models running locally, for lower cost, greater security, and lower latency.

Deploy and serve a language model

- Deploy the language model using the same steps as in Deploy your first model (upload a GGUF model or use the Model Library)

- After deploying, instead of Start Inference you will see Serve Model

- Click Serve Model and enter a port number (default: 8100)

If the port is already occupied by another served model or a process on your machine, you will receive an error message. Select a different port to resolve this.
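To find out in advance whether a port is taken, you can try binding to it yourself. The sketch below is run on the device that will serve the model. The helper names are hypothetical, and it only probes the loopback address, so treat the result as a hint rather than a guarantee.

```python
import socket


def port_is_free(port, host="127.0.0.1"):
    """Return True if nothing is currently bound to host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as probe:
        try:
            probe.bind((host, port))
        except OSError:
            # Binding fails when another process already holds the port.
            return False
    return True


def first_free_port(start=8100, attempts=20):
    """Return the first free port at or after `start`, or None."""
    for port in range(start, start + attempts):
        if port_is_free(port):
            return port
    return None


print(first_free_port())
```

The search starts at 8100 because that is the default shown in the Serve Model dialog.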

Accessing your served model

After the model is served successfully, access it through any of these URLs (replace xxxx with your chosen port):

- http://localhost:xxxx

- http://0.0.0.0:xxxx

- http://127.0.0.1:xxxx

Since served models are OpenAI-API compliant, you can integrate them with compatible applications. See Integrations for examples.
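As an illustration of what OpenAI API compliance means in practice, the sketch below sends a chat request using only the Python standard library. It assumes the server exposes the conventional `/v1/chat/completions` path and returns the usual `choices` structure. The model name `local-model` is a placeholder, so check what your served model expects.

```python
import json
import urllib.request


def chat_request(prompt, port=8100, model="local-model"):
    """Build a POST request for an OpenAI-style chat completion."""
    body = {
        "model": model,  # placeholder name, not a real Loc.ai identifier
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        # Assumption: the served model uses the standard OpenAI path.
        f"http://localhost:{port}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, port=8100):
    """Send the prompt and return the assistant's reply text."""
    request = chat_request(prompt, port)
    with urllib.request.urlopen(request, timeout=60) as response:
        reply = json.load(response)
    return reply["choices"][0]["message"]["content"]


# Requires a model being served on the chosen port:
# print(ask("Say hello in one sentence."))
```

Any client library that lets you override the base URL of an OpenAI-style API should work the same way, pointed at `http://localhost:xxxx`.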

Supported models

Loc.ai currently supports:

- Image Classification — TFLite models for real-time image classification on edge devices

- Audio Classification — TFLite models for audio event detection and classification

- Language Models (LLM) — GGUF format models via llama-cpp for text generation and chat
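As a quick way to see which category a model file could fall into, the sketch below maps file extensions to the supported types. The extensions are an assumption about how such files are usually named, and `candidate_model_types` is a hypothetical helper. A TFLite file can be either an image or an audio classifier, so the extension alone cannot tell them apart.

```python
from pathlib import Path

# Model types are taken from the list above. The file extensions are an
# assumption about common naming, not something Loc.ai is known to enforce.
MODEL_TYPES_BY_SUFFIX = {
    ".tflite": ["Image Classification", "Audio Classification"],
    ".gguf": ["Language Models (LLM)"],
}


def candidate_model_types(filename):
    """Return the supported model types a file could belong to."""
    suffix = Path(filename).suffix.lower()
    return MODEL_TYPES_BY_SUFFIX.get(suffix, [])


print(candidate_model_types("birdsong.tflite"))
print(candidate_model_types("assistant.Q4_K_M.gguf"))
print(candidate_model_types("model.onnx"))
```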

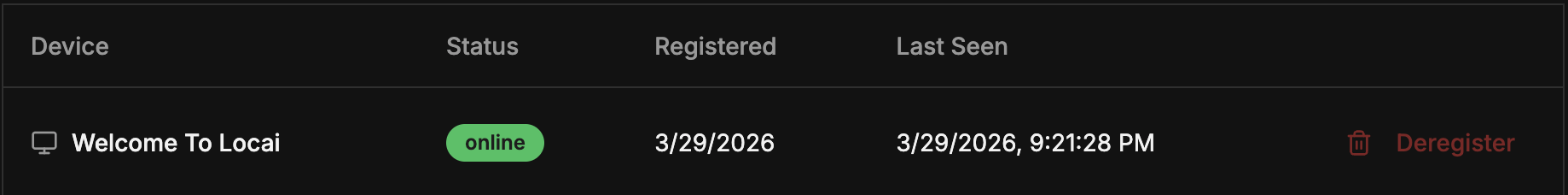

Device management

To manage, unregister, or delete a device in your Loc.ai account:

- Navigate to the Registered Devices card in the dashboard

- Click Details under the device you want to manage

- Use Edit Device, Create Recovery Key, Rotate API Key, or Delete Device

If you want to reuse a deleted device, you must clear the config files on the device first:

uv run manager.py reset --hard

Troubleshooting

| Issue | Solution | Platform |
|---|---|---|
| Permission denied | Ensure the script is executable: chmod +x install.sh | Linux/macOS |
| Python/Pip errors | The installer uses uv to manage Python versions. Deactivate any active Conda or virtual environment before starting to avoid conflicts. | All |
| Connection refused | Check firewall settings so the device can make outbound HTTPS requests to the Loc.ai control plane. | All |
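For the connection issue, a simple first test is whether the device can open an outbound TCP connection on port 443. The sketch below does that with the standard library. `can_reach` is a hypothetical helper, and using `control.locai.co.uk` as the host is an assumption based on the registration URL in this guide, since the agent may contact a different endpoint.

```python
import socket


def can_reach(host, port=443, timeout=5.0, connect=socket.create_connection):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        connection = connect((host, port), timeout=timeout)
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False
    connection.close()
    return True


# Run on the device that cannot connect:
# print(can_reach("control.locai.co.uk"))
```

A `True` result only shows that the port is reachable. A proxy or TLS inspection can still interfere with HTTPS requests.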

Next steps

- Architecture overview — How components work together

- Glossary — Key terms and definitions