Open WebUI integration

Build a private chatbot with Loc.ai and Open WebUI: your data stays on your hardware, there are no subscription fees, and you keep full control.

What is Open WebUI?

Open WebUI is a self-hosted chat UI for LLMs with a ChatGPT-like experience in the browser, talking to any OpenAI-compatible API.

How it works

- Loc.ai — Deploys and serves the model on your hardware (PC or VM).

- Open WebUI — The browser UI on your main computer.

Open WebUI sends requests over your network to the model that Loc.ai serves.

Step 1: Connect your device

- Log into the Loc.ai dashboard → Devices

- Click Add Device and copy the curl command

- Run it on the machine that will run the model:

curl -sSL https://raw.githubusercontent.com/locai-co-uk/locai-link/main/install.sh | bash -s -- \
  --device-name "YOUR_DEVICE" \
  --username "YOUR_USERNAME" \
  --registration-key "YOUR_KEY" \
  --start-running

When registered, the device shows Online.

Step 2: Upload and deploy

- Go to ML MODELS

- Upload a GGUF model (e.g. a quantised Qwen) or pick one from the library, and set its type to Language Model

- Deploy to the device from step 1

Step 3: Start serving

- Open the deployed model in the dashboard

- Click Start Serving and pick a port (e.g. 8123)

Note: Save the device IP and port for step 5.
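Before moving on, it can help to confirm that the port you picked is reachable from the computer that will run Open WebUI. The following is a minimal sketch using only the Python standard library; the function name `is_port_open` and the address in the usage comment are placeholders, not part of Loc.ai. It only checks that something accepts TCP connections on that port, not that the model itself is healthy.

```python
import socket


def is_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection resolves the host and attempts a TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


# Usage (placeholder values, replace with your device IP and chosen port):
#   is_port_open("192.168.1.50", 8123)
```

If the check returns False, a firewall on the device or a mistyped IP address are common things to look at first.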

Step 4: Launch Open WebUI

pip install open-webui

open-webui serve

Open http://localhost:8080 in your browser.

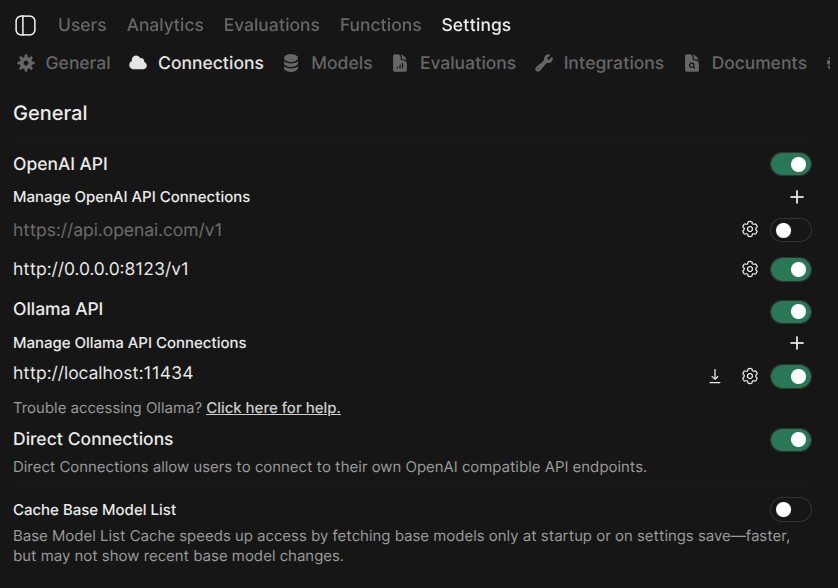

Step 5: Connect to Loc.ai

- In Open WebUI: profile → Settings → Admin Settings → Connections

- Under OpenAI API, add a connection

- Set API Base URL to http://<YOUR_DEVICE_IP>:<PORT>/v1

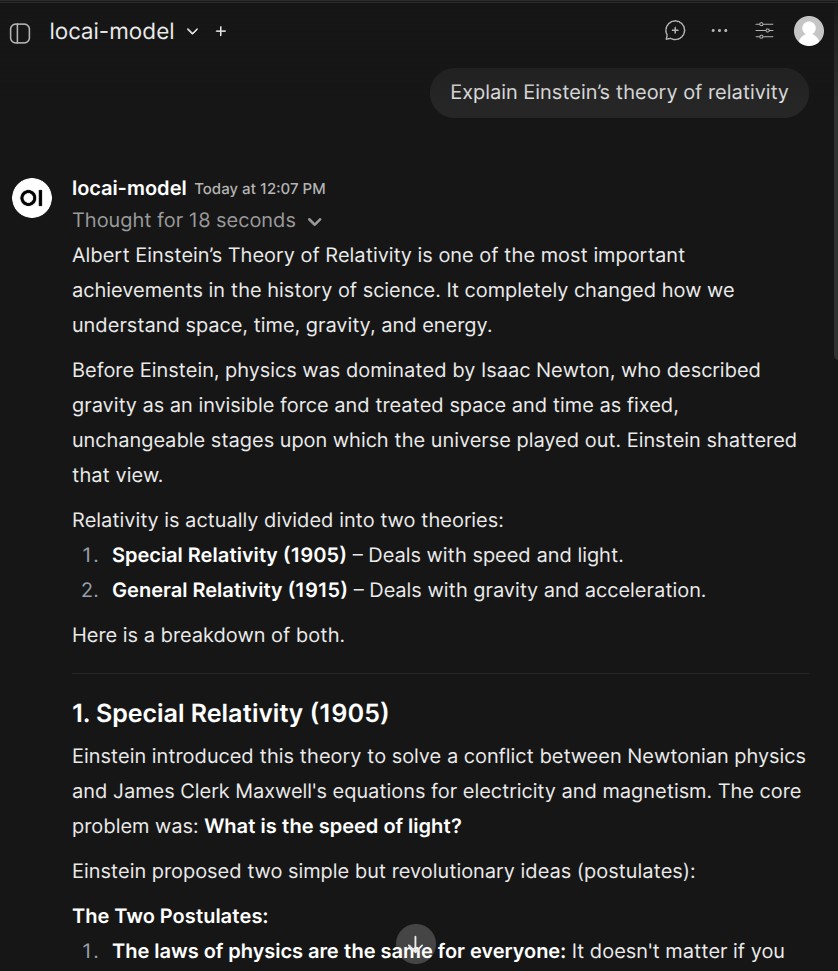
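The base URL from this step can be assembled and sanity-checked in a few lines of Python. This is a sketch under one stated assumption: that the served model exposes the usual OpenAI-style `GET /v1/models` endpoint, which is what an OpenAI-compatible client would typically query. The helper names (`build_base_url`, `model_ids_from_response`, `list_models`) are hypothetical and not part of Loc.ai or Open WebUI.

```python
import json
import urllib.request
from urllib.parse import urlunsplit


def build_base_url(device_ip: str, port: int) -> str:
    """Build the API Base URL in the form http://<YOUR_DEVICE_IP>:<PORT>/v1."""
    if not 1 <= port <= 65535:
        raise ValueError(f"port out of range: {port}")
    return urlunsplit(("http", f"{device_ip}:{port}", "/v1", "", ""))


def model_ids_from_response(body: dict) -> list[str]:
    """Extract model IDs from an OpenAI-style model list: {"data": [{"id": ...}]}."""
    return [entry["id"] for entry in body.get("data", [])]


def list_models(base_url: str, timeout: float = 10.0) -> list[str]:
    """Fetch model IDs from <base_url>/models (assumed endpoint, see lead-in)."""
    with urllib.request.urlopen(f"{base_url}/models", timeout=timeout) as resp:
        return model_ids_from_response(json.load(resp))


# Usage (placeholder values):
#   base = build_base_url("192.168.1.50", 8123)
#   print(list_models(base))
```

If `list_models` returns at least one ID, the same base URL should be suitable to paste into the connection form.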

Step 6: Start chatting

Choose your Loc.ai model from the model dropdown and chat.
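The same served model can also be called directly, without the browser UI, which is handy for scripting or debugging. The sketch below assumes the server accepts the standard OpenAI-style `POST /v1/chat/completions` request and returns the reply under `choices[0].message.content`; the model name and address in the usage comment are placeholders, and `build_chat_request` and `send_chat` are hypothetical helper names.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat(request: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.load(resp)
    # Assumes the OpenAI-style response shape described in the lead-in.
    return body["choices"][0]["message"]["content"]


# Usage (placeholder values):
#   req = build_chat_request("http://192.168.1.50:8123/v1", "YOUR_MODEL", "Hello!")
#   print(send_chat(req))
```

Building the request separately from sending it makes it easy to inspect the URL and payload before anything goes over the network.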

Tip: To chat with several served models, add one connection per model, each pointing at that model's port.
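When several models are served on different ports of the same device, the base URLs differ only in the port. The sketch below generates them from a list of ports. It also joins them with semicolons, which is the format Open WebUI's `OPENAI_API_BASE_URLS` environment variable is understood to accept; treat that variable as an assumption to verify against the Open WebUI documentation for your version. The helper names are hypothetical.

```python
def base_urls_for_ports(device_ip: str, ports: list[int]) -> list[str]:
    """Return one http://<ip>:<port>/v1 base URL per served model port."""
    return [f"http://{device_ip}:{port}/v1" for port in ports]


def as_env_value(urls: list[str]) -> str:
    """Join base URLs with semicolons (assumed OPENAI_API_BASE_URLS format)."""
    return ";".join(urls)


# Usage (placeholder values):
#   urls = base_urls_for_ports("192.168.1.50", [8123, 8124])
#   print(as_env_value(urls))
```

Adding each URL by hand under Connections, as described in step 5, achieves the same result without any environment variables.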