VS Code integration

Author: Aleksander Lovric

Integrate your editor with locally deployed language models using Loc.ai and the Continue extension for VS Code.

What is Continue?

Continue is an open-source AI assistant for VS Code and JetBrains. It supports chat, code explanation, refactoring, and Tab Autocomplete, all backed by models running on your own hardware.

Serving models via Loc.ai lets you run inference on a dedicated edge device while you code on your main machine.

Prerequisites

- Loc.ai account — Register your account

- A device registered — Register your first device

- VS Code with the Continue extension

1. Connect your device

Register your device with Loc.ai. See the device registration guide.

- Open the Devices tab in the Loc.ai dashboard

- Click Register Device (or Add Device) to open the registration flow and generate your registration command

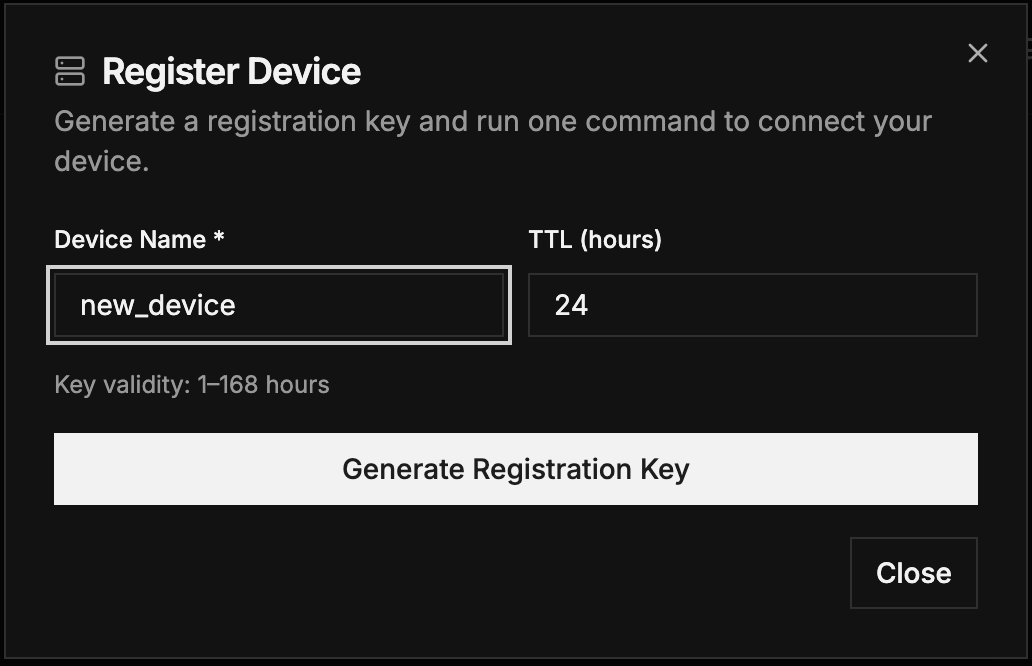

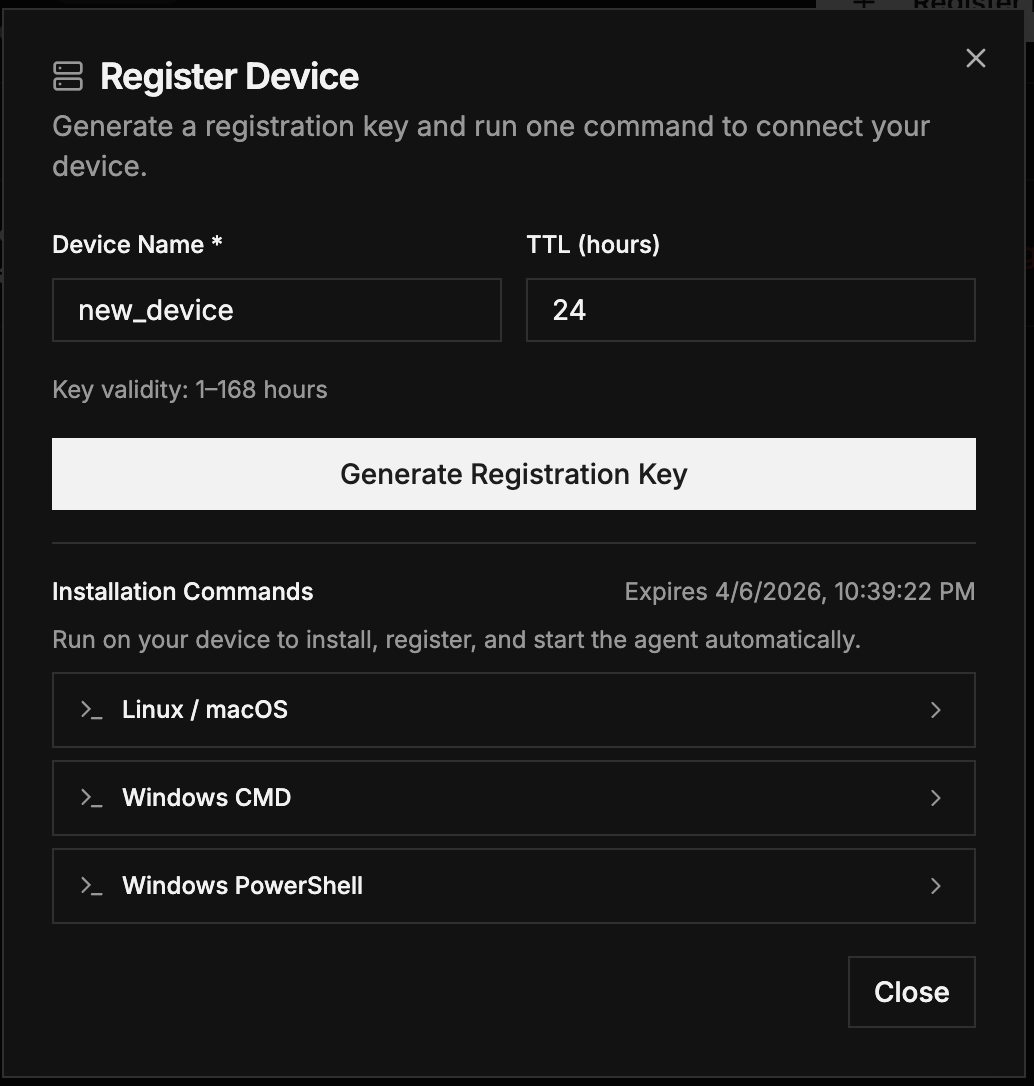

Q2. Register Device popup – enter device name, TTL, and generate key (same as Quickstart)

Q3. Generated one-line installation command ready to copy (same as Quickstart)

Paste the generated curl command into a terminal on the device and run it. Once registration completes, the device shows as Online.

2. Deploy and serve

Choose a GGUF language model — see Supported models.

- Upload a model or pick one from ML MODELS (e.g.

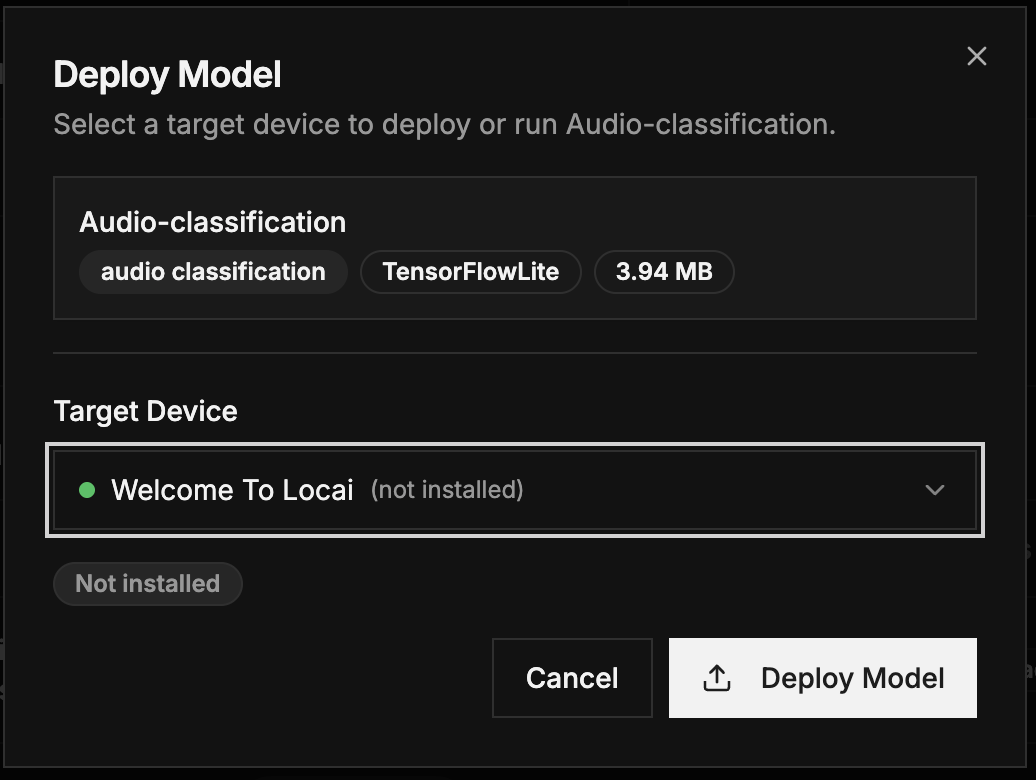

Qwen2.5-Coder-1.5B Q4_K_M.gguf) with type Language Model - Deploy to your registered device (same deployment dialog as in the Quickstart)

- After deploy, click Start Serving and note the IP address and port (see Serving a model)

Q4. Deploy Model dialog – select target device and review deployment summary (same as Quickstart)

You will need the IP address and port from the serving panel when configuring Continue.
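Before configuring Continue, it can help to confirm that the serving endpoint is reachable from your main machine. The following is a minimal sketch, not an official Loc.ai tool. It assumes the endpoint behaves like a llama.cpp HTTP server and answers `GET /health` with HTTP 200 when the model is ready (an assumption based on the `llama.cpp` provider used in the Continue configuration). The helper names `base_url` and `is_reachable` are hypothetical.

```python
import urllib.error
import urllib.request


def base_url(host: str, port: int) -> str:
    """Build the base URL in the same form as the apiBase value in config.yaml."""
    return f"http://{host}:{port}/"


def is_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if GET <base_url>health answers with HTTP 200.

    Assumption: the server exposes a llama.cpp-style /health endpoint.
    Any connection error, timeout, or non-2xx status is treated as
    "not reachable".
    """
    url = base_url(host, port) + "health"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, OSError):
        # Covers refused connections, timeouts, and HTTP error statuses.
        return False
```

For example, `is_reachable("192.168.1.50", 8080)` would check a device at a placeholder address; substitute the IP address and port shown in the serving panel. A `False` result may mean the model is still loading, the port is blocked, or the endpoint does not expose `/health` at all.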

3. Configuration

In VS Code, open the Continue sidebar, click the gear icon, open config.yaml, and add:

    version: 0.0.1
    models:
      - name: chat_model
        provider: llama.cpp
        model: <YOUR_MODEL_NAME>
        apiBase: http://<YOUR_DEVICE_IP>:<PORT>/
      - name: autocomplete_model
        provider: llama.cpp
        model: <YOUR_MODEL_NAME>
        apiBase: http://<YOUR_DEVICE_IP>:<PORT>/
        roles:
          - autocomplete

Replace <YOUR_MODEL_NAME> with the name of the model you deployed, and <YOUR_DEVICE_IP> and <PORT> with the values from the serving panel.

4. Usage

- Chat: Select your Loc.ai model in the Continue sidebar

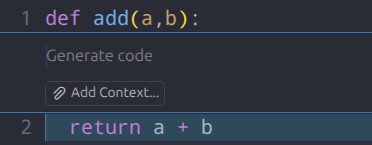

- Autocomplete: Suggestions appear inline in grey; press Tab to accept, or Ctrl + . for inline actions

To use multiple models served on different ports, add each one as a separate entry under models with its own apiBase. See also OpenClaw.
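As an illustration of a multi-model setup, the sketch below renders a Continue config in which each model points at its own port. It is a hedged example only: the `ServedModel` class and `render_config` function are hypothetical helpers, the IP address and ports are placeholders, and the output mirrors the fields used in the configuration step above (`name`, `provider`, `model`, `apiBase`, `roles`). Values are written unquoted, so it assumes simple names without YAML special characters.

```python
from dataclasses import dataclass, field


@dataclass
class ServedModel:
    """One model served on its own port (hypothetical helper type)."""
    name: str    # label shown in the Continue model picker
    model: str   # model name as deployed
    host: str    # device IP from the serving panel
    port: int    # port from the serving panel
    roles: list = field(default_factory=list)  # e.g. ["autocomplete"]


def render_config(models: list) -> str:
    """Render a config.yaml body with one models entry per served model."""
    lines = ["version: 0.0.1", "models:"]
    for m in models:
        lines += [
            f"  - name: {m.name}",
            "    provider: llama.cpp",
            f"    model: {m.model}",
            f"    apiBase: http://{m.host}:{m.port}/",
        ]
        if m.roles:
            lines.append("    roles:")
            lines += [f"      - {role}" for role in m.roles]
    return "\n".join(lines) + "\n"


# Placeholder values; replace with the details from your serving panels.
config = render_config([
    ServedModel("chat_model", "<YOUR_CHAT_MODEL>", "192.168.1.50", 8080),
    ServedModel("autocomplete_model", "<YOUR_AUTOCOMPLETE_MODEL>",
                "192.168.1.50", 8081, ["autocomplete"]),
])
print(config)
```

The printed text can be pasted into config.yaml. Keeping one entry per port makes it clear which served model handles chat and which handles autocomplete.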